Primary interop — the foundational challenge of making diverse software systems communicate reliably — has a new answer in 2026, and that answer is the Model Context Protocol. For the first fifteen years of cloud software, interoperability was solved at the API layer: REST endpoints, JSON contracts, OAuth handshakes. That architecture served a world of human-paced, application-to-application communication. It was not designed for what enterprise AI demands in 2026 — autonomous agents that discover tools dynamically, chain tool calls across multiple systems in a single session, maintain stateful context across multi-turn interactions, and act faster than any human approval loop.

The numbers confirm the architectural shift is already underway. Enterprise MCP adoption has crossed 78% among production AI teams. The public MCP registry has surpassed 9,400 servers. The protocol has recorded more than 97 million SDK downloads. In April 2026 alone, the Agentic AI Foundation held the MCP Dev Summit North America in New York, drawing approximately 1,200 attendees. The protocol that Anthropic introduced in November 2024 and donated to the Linux Foundation in December 2025 has become the de facto primary interop standard for AI systems in less time than any previous developer protocol in the AI space.

This article explains why MCP has won the primary interop contest for AI systems, how it fits within the broader 2026 protocol ecosystem alongside A2A, ACP, and UCP, what enterprise architects need to understand about MCP gateway architecture, and what it actually costs to deploy — with pricing in both USD for US teams and GBP for UK teams.

→ [See our Enterprise AI Protocol Architecture and Integration Framework]

78% — Enterprise production AI teams have adopted MCP (Maxim AI 2026) 9,400+ — Servers in the public MCP registry as of May 2026 97M+ — MCP SDK downloads recorded by April 2026.

What Changed in Enterprise Interoperability — And Why MCP Is the Answer

The interoperability problem has existed since the first enterprise deployed two software systems that needed to share data. What changed in 2025 and 2026 is the nature of the consumer making the interoperability request. Legacy API integration was designed for deterministic software code — a developer writes a call to a specific endpoint, parses the response, handles the defined error codes. The consumer is a human-authored program with a fixed, predictable execution path.

AI agents are a categorically different consumer. They operate through probabilistic reasoning. They discover available capabilities dynamically rather than calling pre-coded endpoints. They chain tool calls across multiple systems within a single session without a human developer orchestrating each step. They maintain session state across multi-turn interactions that may span minutes or hours. They generate context that must persist between tool calls. And they can act — not just read — on enterprise systems at speeds and volumes that make per-request human review impractical.

“MCP addresses a growing demand for AI agents that are contextually aware and capable of pulling from diverse sources. It is the missing layer between what AI models can reason about and what enterprise systems can expose.” — The Verge, April 2026 — MCP Dev Summit coverage

The pre-MCP approach to AI interoperability was an N×M problem: every AI model required a custom connector for every enterprise tool. Ten models connecting to fifty tools meant five hundred custom integrations — each requiring separate maintenance, security review, and update cycles. MCP solves this with a universal standard: any MCP-compliant agent can connect to any MCP-compliant server. N+M integrations instead of N×M. The architectural simplification is not incremental — it is transformative for enterprise teams managing complex AI agent stacks.

How the 2026 AI Agent Protocol Stack Actually Works

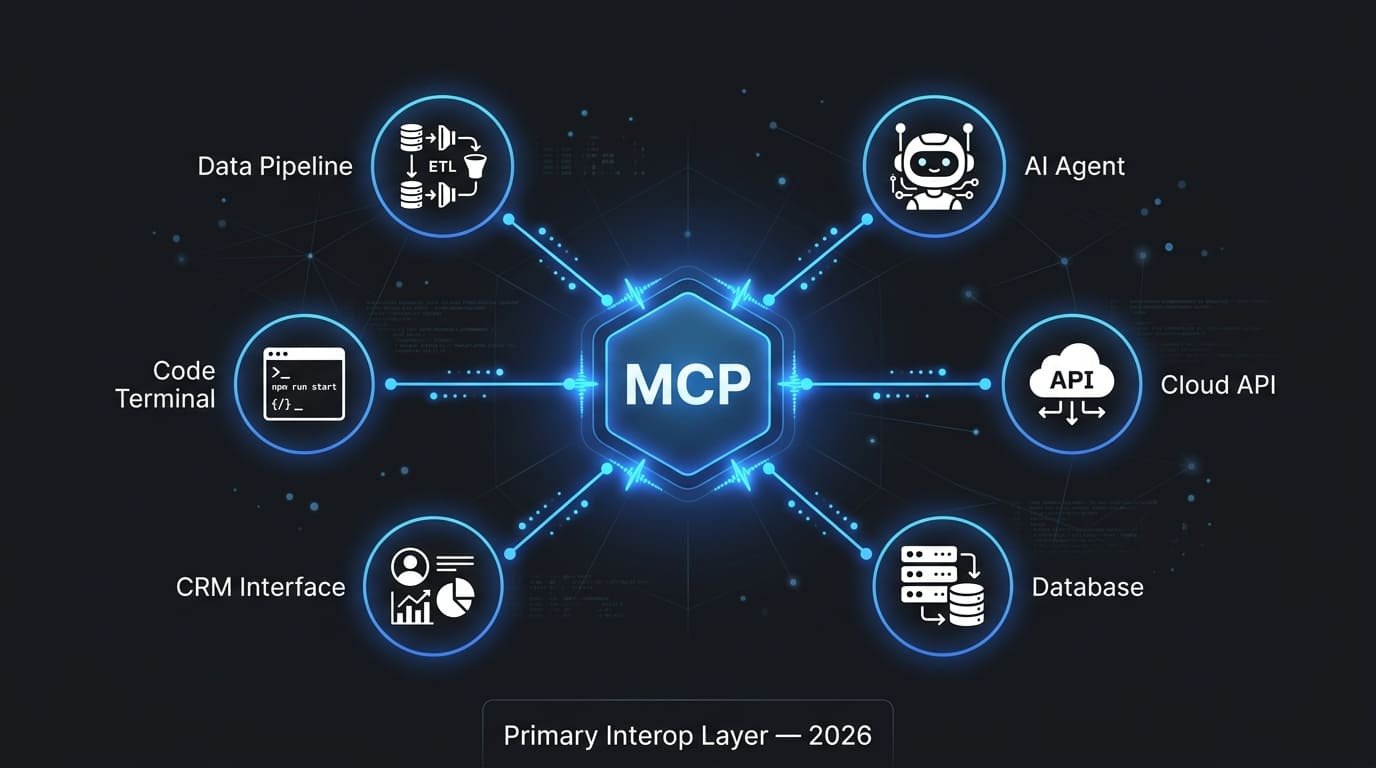

Understanding MCP’s role in primary interop requires understanding where it sits within the broader 2026 AI agent protocol ecosystem. The ecosystem has consolidated around four complementary protocols, each handling a distinct layer of agent communication. These protocols do not compete — they compose.

MCP — Agent → Tool Access (JSON-RPC 2.0 / HTTP+SSE) A2A — Agent → Agent Coordination (Agent Cards / HTTPS) ACP — Commerce Transactions (Open agent-to-agent) UCP — Google Commerce Layer (Google ecosystem native)

MCP — The Primary Interop Layer

MCP is the primary interop layer — the protocol that handles how AI agents connect to external tools, APIs, and data sources. Introduced by Anthropic in November 2024, donated to the Linux Foundation’s Agentic AI Foundation in December 2025, and now maintained as an open standard with co-founders Anthropic, Block, and OpenAI, MCP has achieved the cross-vendor adoption that makes it genuinely primary rather than vendor-specific.

The architecture is clean: an MCP Host (the application environment — Claude, VS Code, an enterprise agent platform), an MCP Client embedded in the host, and MCP Servers that expose tools, resources, and prompts. Communication runs over JSON-RPC 2.0, transported via HTTP with Server-Sent Events for streaming or stdio for local development. The stateful session model is what differentiates MCP from REST APIs — the server maintains state about available tools, active resources, and in-progress operations across the agent’s full interaction lifecycle.

A2A — Agent-to-Agent Coordination

Where MCP handles agent-to-tool connections, A2A handles agent-to-agent coordination. Google’s Agent-to-Agent protocol enables different AI agents — potentially from different vendors — to discover each other’s capabilities and delegate tasks through Agent Cards and structured messaging. A2A launched with 50+ partners and is now part of the Microsoft Agent Framework 1.0 GA release of April 2026. For enterprise teams building multi-agent systems, MCP and A2A are complementary: MCP provides the tool access foundation, A2A provides the inter-agent coordination layer on top of it.

ACP and UCP — Commerce Layer

The Agent Commerce Protocol from IBM and the Linux Foundation handles open agent-to-agent commerce transactions — payment, fulfilment, and transaction semantics. Google’s Universal Commerce Protocol (UCP) covers equivalent ground within the Google ecosystem. Both are early-stage relative to MCP and A2A, relevant primarily to enterprise teams whose AI agents need to execute autonomous commercial transactions. The sequencing recommendation from Digital Applied’s April 2026 protocol ecosystem map is clear: establish MCP first, add A2A for multi-agent coordination, evaluate commerce protocols only when autonomous transacting is a genuine operational requirement.

Primary Interop Decision: MCP Server vs API Gateway — A Direct Comparison

The most common architectural question enterprise teams face when evaluating MCP is whether it replaces their existing API gateway infrastructure. The answer — consistent across API7.ai, TrueFoundry, VMware Tanzu, and The New Stack’s March 2026 analysis — is that MCP and API gateways serve different primary consumers and solve different primary problems. They are not interchangeable. In most mature enterprise AI architectures in 2026, you need both.

| Dimension | API Gateway | MCP Server | Best Fit |

|---|---|---|---|

| Primary Consumer | Applications — web, mobile, service-to-service | AI agents — LLMs, autonomous agents, agentic workflows | Each has its own |

| Session Model | Stateless — each request independent | Stateful — session persists across multi-turn interactions | MCP for agents |

| Tool Discovery | Static — developer pre-codes endpoint calls | Dynamic — agent discovers available tools at runtime | MCP for AI |

| Context Handling | Request/response — no cross-call context | Context-aware — server maintains state across calls | MCP for agents |

| Transport | HTTP REST, GraphQL, gRPC | HTTP + SSE for streaming, stdio for local | MCP for streaming |

| Auth Model | API keys, OAuth, JWT — well established | OAuth 2.1, OIDC, dynamic client registration | Both mature |

| Observability | Mature — decades of tooling | Emerging — MCP gateway layer required for enterprise | API Gateway today |

| Enterprise Maturity | Very high — Kong, Apigee, AWS API GW | Maturing rapidly — 78% adoption, gateway ecosystem growing | API Gateway today |

| AI Workflow Fit | Poor — stateless model mismatch for agents | Purpose-built — designed for agentic interaction patterns | MCP clearly |

The practical architecture: your web application calls your REST API through the API gateway. Your AI assistant embedded in the same application uses MCP servers. Same underlying enterprise data and systems — different interfaces for different consumers. As VMware Tanzu’s May 2026 analysis puts it directly: APIs are for services; MCP is for LLMs. In many enterprise deployments you will need both, and a unified gateway that handles both traffic types on a single control plane is the most operationally efficient approach.

→ [VMware Tanzu — MCP vs APIs: Why You Need Both — blogs.vmware.com/tanzu]

Primary Interop Investment: MCP Gateway Pricing for US and UK Enterprise Teams

The MCP gateway market has matured rapidly in 2026, with five distinct enterprise solutions differentiated by deployment model, governance depth, and pricing architecture. The following pricing overview covers the leading options with costs in both USD (for US teams) and GBP (for UK teams), reflecting current market rates. Exchange rate reference: £1 ≈ $1.27 as of May 2026.

| MCP Gateway | US Pricing (USD) | UK Pricing (GBP) | Deployment Model |

|---|---|---|---|

| Bifrost (Open Source) | Free — self-hosted | Free — self-hosted | Open source; Docker / NPX; in-VPC option |

| Bifrost (Enterprise) | Custom — contact sales | Custom — contact sales | Clustering, Vault integration, enterprise SLA |

| Kong AI Gateway | Starts ~$250/month | Starts ~£197/month | Enterprise: Kong Konnect — hybrid cloud or self-hosted |

| Cloudflare MCP | $20/month (Workers Pro) | £16/month (Workers Pro) | Edge-native; Cloudflare One stack required for full feature set |

| MintMCP (Managed) | $49–$299/month | £39–£236/month | SOC 2 Type II certified managed gateway; STDIO to HTTP/SSE |

| Apigee (Google Cloud) | From $560/month | From £441/month | Google Cloud-native; zero-code MCP from API spec; steep GCP lock-in |

| Tyk AI Studio | Free (open source CE) | Free (open source CE) | Open-sourced March 2026; Go-based; MCP toolchain plugin support |

Pricing note for UK teams: VAT at 20% applies to software subscriptions from non-UK vendors under the UK’s digital services tax framework — factor this into TCO calculations for Cloudflare, Kong, Apigee, and managed MCP gateway subscriptions. US teams should note that AWS, Azure, and GCP infrastructure costs for self-hosted MCP gateway deployments add approximately $80–$400 per month depending on instance size and redundancy configuration.

“The right MCP gateway centralizes authentication, enforces tool-level access policies, captures audit trails, and routes traffic through a single governed control plane. Without it, enterprises end up with scattered credentials, no telemetry, and zero visibility into what agents are doing.” — Maxim AI — Top 5 Enterprise MCP Gateway Solutions, May 2026

The Digital Applied protocol ecosystem map’s sequencing guidance is the most operationally grounded framework for enterprise MCP adoption: start with MCP to establish the tool access foundation, then add A2A when multi-agent coordination becomes necessary, then evaluate commerce protocols only when autonomous transacting is a genuine business requirement.

Phase 1 — Foundation: Build Your MCP Tool Registry

Audit every tool, API, and data source your AI workflows currently access through custom integrations. For each one, build an MCP server — or adopt a community-maintained server if one exists in the 9,400+ server registry. This single investment applies across all current and future agent frameworks that support MCP. The upfront effort is real: an MCP server for a complex enterprise system like Salesforce or SAP requires two to four weeks of engineering. For simpler REST APIs, Apigee’s zero-code MCP generation from OpenAPI specs reduces this to hours. UK teams: factor in IR35 contractor classification if using freelance engineers for MCP server development, as this affects billing rates typically ranging from £450–£850 per day for senior AI engineers.

Phase 2 — Control Plane: Deploy an MCP Gateway

Before connecting any MCP server to a production AI agent, deploy an MCP gateway as your control plane. For teams new to MCP, Bifrost’s open-source Docker deployment (operational in 30 seconds) provides the fastest path to a governed MCP environment without cost commitment. For regulated industries in the US — financial services, healthcare, federal — MintMCP’s SOC 2 Type II managed gateway eliminates the compliance buildout overhead. For UK financial services firms under FCA digital operational resilience guidelines, a managed gateway with immutable audit trails satisfies the AI tool-use audit requirements without bespoke development.

Phase 3 — Agent Integration: Connect Your First Agent

Connect your highest-value agent use case to the MCP gateway first. Define the tool allow-list for this agent — the specific MCP servers and tools it may call — and configure the access control policy in your gateway. Do not give any agent access to all available MCP servers until it has demonstrated stable, audited behaviour with a scoped allow-list. The MCP gateway’s per-key tool filtering capability is the enforcement mechanism — every agent receives a virtual key that maps to its approved tool set.

Phase 4 — Observability and Governance

Activate OpenTelemetry tracing, audit logging, and anomaly detection before expanding MCP usage beyond the initial agent. The audit trail requirement is not optional for enterprise deployments in regulated environments — UK GDPR Article 30 requires records of processing activities, which extends to AI agent tool-use logs where personal data is accessed. US teams in HIPAA-covered entities must ensure MCP tool calls accessing patient data are logged with the same rigour as direct system access.

Phase 5 — Scale and Multi-Agent Coordination

Once MCP infrastructure is stable and governed for your initial agent, design your multi-agent topology and implement A2A coordination for workflows requiring agent-to-agent task delegation. The Accenture research cited in the 2026 MCP-A2A analysis is striking: companies with highly interoperable applications grew revenues approximately six times faster than non-interoperable peers. The revenue case for primary interop investment is not theoretical — it is now empirically grounded.

Conclusion: Primary Interop Is the Strategic Infrastructure Investment of 2026

Primary interop in 2026 is an MCP question because the primary consumer of enterprise integrations has changed. Applications still call APIs. AI agents call MCP servers. Both are necessary. Neither replaces the other. But the enterprise that has not built its MCP infrastructure is operating its AI agents against a backdrop of brittle, N×M custom integrations that will break as the agent count grows, the tool count expands, and the governance requirements tighten.

The 78% adoption rate among production AI teams is not a trailing indicator — it is the evidence that the market has already decided. The question for the remaining 22% is not whether MCP is the right primary interop standard. The question is how much of the competitive and governance gap they can afford to widen before they begin building.

Immediate actions for enterprise architects and technology leaders:

- Audit your current AI agent integrations this week — count the custom connectors in production and calculate the maintenance overhead they carry.

- Evaluate MCP servers for your three highest-value enterprise tools — check the public registry before building bespoke servers.

- Deploy Bifrost or Tyk AI Studio (both free, open source) in a sandbox this sprint — operational experience with MCP gateway architecture accelerates every subsequent decision.

- Define your MCP tool allow-list policy before connecting any production agent to any MCP server — the governance architecture must precede the capability deployment.

- For UK teams: assess FCA digital operational resilience and UK GDPR Article 30 requirements for AI agent tool-use logging before selecting your MCP gateway — managed solutions with pre-built compliance features reduce regulatory buildout cost materially.

- Sequence your protocol investments: MCP first, A2A when multi-agent coordination is required, commerce protocols only when autonomous transacting is operationally necessary.

The companies building durable AI systems in 2026 are no longer optimising prompts. They are optimising orchestration, interoperability, and recovery. Primary interop — built on MCP — is the infrastructure layer that makes the rest of that optimisation compound.

Frequently Asked Questions (FAQs)

Q1: What is primary interop in the context of enterprise AI and MCP?

Primary interop refers to the foundational interoperability layer that enables diverse AI systems — agents, models, tools, and enterprise applications — to communicate reliably without custom-built connectors for every pairing. In 2026, primary interop for AI systems is answered by the Model Context Protocol (MCP), an open standard donated to the Linux Foundation’s Agentic AI Foundation in December 2025. MCP provides a universal interface for AI agents to connect to any MCP-compliant tool, API, or data source, solving the N×M custom integration problem that previously required a separate connector for every model-tool combination.

Q2: How does MCP differ from a traditional API gateway?

API gateways and MCP servers serve different primary consumers. API gateways handle stateless request-response communication for applications — web frontends, mobile clients, service-to-service calls. MCP handles stateful, multi-turn, context-aware communication for AI agents. The fundamental architectural difference is session state: API gateways treat each HTTP request as independent; MCP servers maintain persistent session state across multi-tool-call agent interactions. In most mature enterprise AI architectures in 2026, you need both: an API gateway for application traffic and an MCP gateway for AI agent traffic, potentially unified on a single platform like Kong or Apache APISIX.

Q3: What is the difference between MCP, A2A, ACP, and UCP?

The four protocols serve distinct layers of AI agent communication. MCP (Model Context Protocol) handles agent-to-tool connections — how agents access external tools, APIs, and data. A2A (Agent-to-Agent, Google) handles agent-to-agent coordination — how agents from different vendors discover each other’s capabilities and delegate tasks. ACP (Agent Commerce Protocol, IBM and Linux Foundation) handles open agent-to-agent commerce transactions. UCP (Universal Commerce Protocol, Google) handles commerce transactions within the Google ecosystem. They are complementary, not competing: a complete enterprise agent stack uses all relevant protocols simultaneously, each handling its designated communication type.

Q4: How much does enterprise MCP gateway deployment cost in the US and UK?

Open-source options including Bifrost and Tyk AI Studio are free to self-host, with infrastructure costs of approximately $80–$400 per month (£63–£315/month) depending on configuration. Managed solutions range from MintMCP at $49–$299/month (£39–£236/month) with SOC 2 Type II certification, to Kong AI Gateway from approximately $250/month (£197/month), to Apigee from $560/month (£441/month) for Google Cloud-native deployments. UK teams should factor 20% VAT on software subscriptions from non-UK vendors and IR35 implications for contract MCP server development engineers at £450–£850 per day. Total enterprise deployment costs for a governed, production-grade MCP stack typically run $2,000–$8,000 per month ($1,575–$6,300/month equivalent in GBP) for mid-scale deployments.

Q5: Why has MCP adoption reached 78% among production AI teams so quickly?

MCP solved a genuine and urgent problem — the N×M custom integration burden — with a simple, open, well-documented specification. Its adoption velocity was accelerated by four factors: Anthropic’s decision to donate it to the Linux Foundation made it genuinely vendor-neutral; OpenAI and Google DeepMind adopted it immediately, giving it cross-vendor legitimacy; the developer community built 9,400+ servers rapidly because the specification is straightforward to implement; and the agentic AI deployment wave of 2025 created immediate demand for exactly the problem MCP solves. No previous AI developer protocol has achieved comparable adoption velocity.

Q6: What security risks should enterprises know about MCP?

Security researchers identified multiple MCP security risks in April 2025 that remain relevant in 2026: prompt injection attacks through maliciously crafted tool responses, tool permission combinations that allow data exfiltration across server boundaries, lookalike tools that silently replace trusted ones (tool poisoning), and credential theft through misconfigured MCP server access. The 2026 joint cybersecurity guidance on agentic AI specifies that MCP agents must receive dedicated non-human identities with bounded permissions and revocable credentials. An MCP gateway with tool-level allow-lists, per-key access controls, and real-time anomaly detection addresses the most significant attack vectors. Lasso’s security-first MCP gateway provides active threat detection specifically targeting these AI attack vectors for organisations in security-sensitive environments.

Q7: When should an enterprise start with MCP versus A2A?

Start with MCP. It establishes the tool access foundation that all other agent capabilities depend on. A2A adds value once you have multiple agents that need to coordinate tasks — typically in Phase 2 or Phase 3 of agentic AI deployment maturity. The sequencing is not arbitrary: A2A coordination between agents is only useful if the agents themselves have reliable, governed access to the tools they need. Building A2A coordination before MCP tool access is stable creates orchestration infrastructure that has nothing governed to orchestrate. The Digital Applied protocol ecosystem map’s 2026 sequencing recommendation is explicit: MCP first, A2A when multi-agent coordination becomes necessary.

Q8: How do UK enterprises need to adapt MCP deployment for regulatory compliance?

UK enterprises face several regulatory considerations specific to MCP deployments. UK GDPR Article 30 records of processing requirements apply to AI agent tool-use logs where personal data is accessed through MCP servers — an MCP gateway’s immutable audit trail satisfies this requirement. FCA digital operational resilience guidelines for financial services firms require AI tool-use to be auditable and recoverable — managed MCP gateways with SOC 2 or ISO 27001 certification reduce compliance buildout overhead significantly. ICO guidance on automated decision-making (derived from UK GDPR Article 22) applies where AI agents make or substantially influence decisions affecting individuals — tool-call logging and human oversight mechanisms are required. UK teams should also note that post-Brexit, UK standard contractual clauses rather than EU SCCs govern international data transfers through cloud-hosted MCP gateway infrastructure.

About the Author Waqas Raza

Waqas Raza is a Technical SEO Specialist and Digital Strategist with a focus on B2B SaaS architecture. He writes for enterprise technology leaders, AI architects, and engineering teams building production-grade agentic AI systems for US, UK, and global enterprise environments. vitaloralife.com

vitaloralife.com | Published for US, UK & Global Audiences | © 2026 Waqas Raza