The API gateway vs MCP server debate is now a boardroom conversation, not just a backend engineering decision. As enterprises accelerate their deployment of agentic AI systems, the choice of which infrastructure layer to route through, a battle-tested API gateway or an emerging Model Context Protocol (MCP) server, carries real cost, latency, and competitive consequences.

This guide gives CTOs, solution architects, and technical founders a clear, evidence-based framework for making that choice in 2026.

[See our guide → What Is Agentic AI? A Practical Enterprise Overview]

What Is an API Gateway? (And Why It Still Dominates Enterprise Infrastructure)

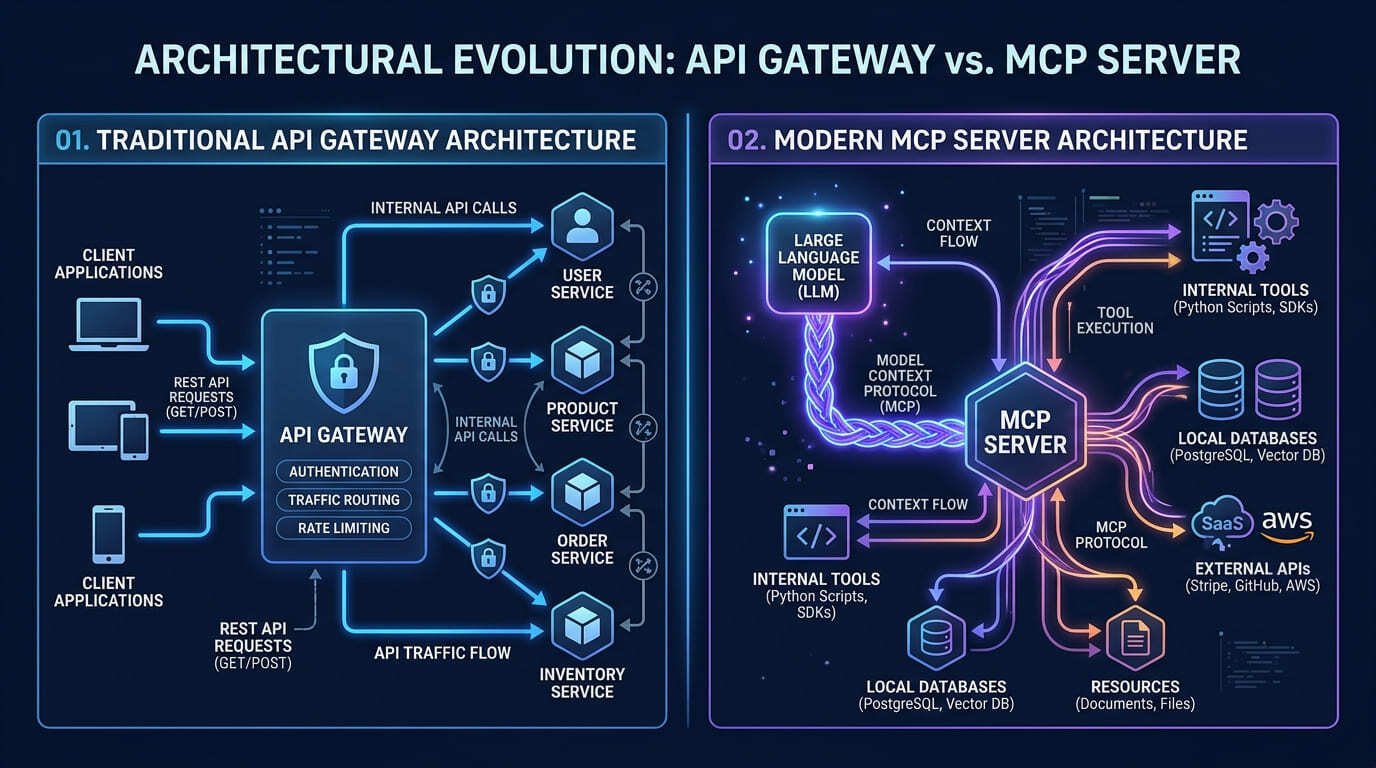

An API gateway is a managed reverse proxy that sits between clients and backend microservices. It handles authentication, rate limiting, load balancing, caching, and traffic routing — all without the client needing to interact with individual services directly.
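As a rough illustration of that request path, the sketch below runs the three checks a gateway typically performs before proxying: authenticate, rate limit, then route. It is a toy model, not any vendor's implementation; the API key, upstream URLs, and limiter settings are hypothetical values chosen for the example.

```python
import time
from dataclasses import dataclass, field


@dataclass
class TokenBucket:
    """Token-bucket rate limiter: refills `rate` tokens per second up to `capacity`."""
    rate: float
    capacity: float
    tokens: float = field(init=False)
    updated: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.updated = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical configuration, for illustration only.
API_KEYS = {"demo-key": "client-a"}
ROUTES = {
    "/orders": "http://orders.internal:8080",
    "/users": "http://users.internal:8080",
}
LIMITERS: dict[str, TokenBucket] = {}


def handle(path: str, api_key: str) -> tuple[int, str]:
    """Return (status_code, detail) as a gateway would decide before proxying."""
    client = API_KEYS.get(api_key)
    if client is None:
        return 401, "unknown API key"
    limiter = LIMITERS.setdefault(client, TokenBucket(rate=1.0, capacity=2))
    if not limiter.allow():
        return 429, "rate limit exceeded"
    for prefix, upstream in ROUTES.items():
        if path.startswith(prefix):
            return 200, f"forward to {upstream}{path}"
    return 404, "no route"
```

Every request pays for all three checks, which is the per-hop cost that matters once agents start making many calls per task.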

Common enterprise API gateways include:

- AWS API Gateway (starting at $3.50/million API calls in the US)

- Kong Gateway (open-source core; enterprise tier from ~$50,000/year in the UK)

- Azure API Management (£0.026/10,000 calls on the Consumption tier in the UK)

- Google Cloud Apigee (enterprise contracts typically $40,000–$120,000/year USD)

API gateways have been the backbone of SaaS infrastructure for over a decade. According to Gartner’s API Strategy Report 2025, more than 80% of enterprise integrations still flow through an API gateway layer.

Their strength is well-established: deterministic routing, mature security tooling (OAuth 2.0, JWT, mTLS), and deep observability integrations with tools like Datadog, Splunk, and CloudWatch.

Limitations for AI workloads:

- Designed for stateless HTTP/REST — not for multi-step, context-rich LLM conversations

- No native support for tool calling, memory retrieval, or agent context passing

- Latency overhead per-hop adds up across multi-agent orchestration chains

- Cannot dynamically expose tools or capabilities to a language model at runtime

[Read more → Microservices vs Agentic AI Systems: Architecture Comparison]

What Is an MCP Server? The Architecture Built for AI-First Applications

The Model Context Protocol (MCP), introduced by Anthropic in late 2024 and rapidly adopted by OpenAI, Google DeepMind, and Microsoft, is a standardised open protocol that enables language models to securely access tools, data sources, and APIs in real time.

An MCP server acts as the middleware between an LLM and its external capabilities — exposing structured “tools” (functions the model can call), “resources” (data it can read), and “prompts” (templates it can invoke) through a consistent interface.
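To make the "tools" part concrete, here is a minimal sketch of the two JSON-RPC 2.0 requests a client uses for tools, `tools/list` and `tools/call`, handled by a toy dispatcher written with the standard library only. It is not the official SDK and it omits the initialisation handshake, transports, resources, and prompts. The `get_order_status` tool and its data are hypothetical.

```python
import json


def get_order_status(order_id: str) -> str:
    """Hypothetical tool; a real server would query a database or API here."""
    return f"Order {order_id}: shipped"


TOOLS = {
    "get_order_status": {
        "handler": get_order_status,
        "description": "Look up the shipping status of an order.",
        "inputSchema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    }
}


def _error(request_id, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": request_id,
                       "error": {"code": code, "message": message}})


def handle_message(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request and return the serialised response."""
    request = json.loads(raw)
    request_id = request.get("id")
    method = request["method"]
    params = request.get("params", {})

    if method == "tools/list":
        # Advertise each tool's name, description, and JSON Schema for its input.
        result = {"tools": [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(request_id, -32602, "Unknown tool")
        text = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return _error(request_id, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})
```

The model never sees the handler itself, only the name, description, and input schema returned by `tools/list`, which is what lets a client discover tools at runtime.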

How an MCP server differs architecturally:

| Dimension | API Gateway | MCP Server |
|---|---|---|
| Primary protocol | HTTP / REST / GraphQL | JSON-RPC 2.0 over stdio or HTTP (SSE / Streamable HTTP) |
| Primary consumer | Any client application | Language models (LLMs / AI agents) |
| Context handling | Stateless per request | Stateful, context-aware |
| Tool discovery | Manual SDK integration | Dynamic, runtime tool exposure |
| Security model | Network perimeter + auth headers | Scoped capability grants per session |
| AI-readiness | Retrofit required | Native |
| Typical cost (self-hosted) | Infrastructure only | Infrastructure only |
| Typical cost (managed) | From $3.50 per million calls to $120,000+/year enterprise contracts | Nascent market; early-stage pricing |

MCP servers are purpose-built for the way LLMs consume information: iteratively, contextually, and with structured tool access. They eliminate the translation layer that developers currently build manually between API responses and LLM prompts.

Key MCP server capabilities:

- Dynamic tool registration (add a new database connection without redeploying)

- Session-level context persistence across multi-turn agent interactions

- Fine-grained capability scoping — an agent gets only the tools it needs for a task

- Natively compatible with Claude, GPT-4o, Gemini, and other major frontier models
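Dynamic tool registration from the list above can be sketched as an in-memory registry that accepts new tools while the server is running and queues a `notifications/tools/list_changed` message so connected clients know to call `tools/list` again. The `ToolRegistry` class, its `outbox` queue, and the `query_sales_db` tool are hypothetical names for this example; delivery of the notification over a real transport is left out.

```python
class ToolRegistry:
    """In-memory tool registry that supports registration at runtime."""

    def __init__(self) -> None:
        self._tools: dict[str, dict] = {}
        self.outbox: list[dict] = []  # notifications waiting to be sent to clients

    def register(self, name: str, description: str, input_schema: dict) -> None:
        self._tools[name] = {
            "name": name,
            "description": description,
            "inputSchema": input_schema,
        }
        # JSON-RPC notification (no "id"): clients should refresh their tool list.
        self.outbox.append({
            "jsonrpc": "2.0",
            "method": "notifications/tools/list_changed",
        })

    def list_tools(self) -> list[dict]:
        return list(self._tools.values())


registry = ToolRegistry()
registry.register(
    "query_sales_db",
    "Run a read-only query against the sales database.",
    {"type": "object", "properties": {"sql": {"type": "string"}}, "required": ["sql"]},
)
```

No redeploy is involved: the next `tools/list` response simply includes the new entry.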

API Gateway vs MCP Server — Head-to-Head Architecture Comparison

Performance and Latency

API gateways introduce 10–50ms of additional latency per hop due to authentication checks, logging, and rate-limit evaluation. In a human-facing REST API, this is negligible.

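In a multi-agent orchestration chain where agents call 8–15 tools per task cycle, that overhead compounds. A five-agent pipeline in which each tool call crosses three gateway hops makes 120–225 hops per task cycle (5 agents × 8–15 calls × 3 hops). Even at the low end of 10ms per hop, that is 1,200–2,250ms of pure routing overhead when the calls run sequentially, which degrades the user experience and, where longer-running tasks carry larger context windows, can increase LLM token costs.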
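The arithmetic behind the 1,200–2,250ms figure can be checked with a short calculation. This sketch assumes that every tool call crosses all three gateway hops and that calls run one after another, which the scenario implies but does not state outright. The function name is illustrative.

```python
def routing_overhead_ms(agents: int, calls_per_agent: int,
                        hops_per_call: int, ms_per_hop: float) -> float:
    """Total sequential routing overhead for one task cycle, in milliseconds."""
    return agents * calls_per_agent * hops_per_call * ms_per_hop


AGENTS, HOPS_PER_CALL = 5, 3

for calls in (8, 15):          # tool calls per agent per task cycle
    for ms in (10, 50):        # gateway latency per hop
        total = routing_overhead_ms(AGENTS, calls, HOPS_PER_CALL, ms)
        print(f"{calls} calls/agent at {ms} ms/hop: {total:,.0f} ms")
```

At 10ms per hop the totals are 1,200ms and 2,250ms, matching the range quoted above. At 50ms per hop the same pipeline would reach 6,000ms to 11,250ms, so the quoted range is the optimistic end of the estimate.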

MCP servers, operating closer to the model layer, reduce routing overhead by eliminating redundant auth re-evaluation for tool calls within the same session.

Security Architecture

API gateways enforce security at the network perimeter: TLS termination, OAuth scopes, IP allowlisting, and JWT validation.

MCP servers enforce security at the capability level: a session receives a scoped set of tools and resources it is authorised to access. This is consistent with the risk-based approach of NIST’s AI Risk Management Framework (AI RMF), although the framework is voluntary and does not prescribe a specific control mechanism.
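One way to picture capability-level enforcement is a session object that carries an explicit allowlist of tools, checked on every call and recorded in an audit log. This is an application-level pattern sketched under assumptions, not a mechanism defined by the MCP specification. `Session`, `call_tool`, `AUDIT_LOG`, and the two invoice tools are hypothetical.

```python
from dataclasses import dataclass


class CapabilityError(PermissionError):
    """Raised when a session calls a tool outside its grant."""


@dataclass(frozen=True)
class Session:
    session_id: str
    allowed_tools: frozenset[str]


# Hypothetical tools, stubbed for the example.
TOOL_REGISTRY = {
    "read_invoice": lambda invoice_id: f"invoice {invoice_id}",
    "issue_refund": lambda invoice_id: f"refunded {invoice_id}",
}

# (session_id, tool, allowed) for every attempted call, granted or denied.
AUDIT_LOG: list[tuple[str, str, bool]] = []


def call_tool(session: Session, tool: str, **arguments):
    """Run a tool only if this session was granted it; log every attempt."""
    allowed = tool in session.allowed_tools and tool in TOOL_REGISTRY
    AUDIT_LOG.append((session.session_id, tool, allowed))
    if not allowed:
        raise CapabilityError(f"{tool!r} is not granted to session {session.session_id}")
    return TOOL_REGISTRY[tool](**arguments)


support_session = Session("s1", frozenset({"read_invoice"}))
```

Here `support_session` can read invoices but an attempt to call `issue_refund` raises `CapabilityError`, and both attempts appear in `AUDIT_LOG`.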

For EU-regulated enterprises operating under the AI Act and GDPR, MCP’s granular permission model can provide a clearer audit trail for automated decision-making, which helps when demonstrating compliance with Article 22 of GDPR, the provision covering decisions based solely on automated processing.

Developer Experience

| Task | API Gateway | MCP Server |
|---|---|---|
| Add a new data source | Write adapter + deploy + document endpoint | Register new resource in MCP config |
| Grant agent access to a tool | Modify OAuth scopes + re-deploy | Add tool to session capability list |
| Debug an agent interaction | Reconstruct from logs | Full session context available natively |
| Onboard a new LLM provider | API version compatibility work | Protocol-native; works across providers |

API Gateway vs MCP Server — Which Should Your Enterprise Choose?

This is not a binary choice for most enterprise architectures. The answer depends on your primary workload.

Choose an API Gateway when:

- Your core product is a SaaS application consumed by human users via web or mobile

- Your integrations are primarily service-to-service, not model-to-tool

- You require mature compliance certifications (SOC 2, ISO 27001, FedRAMP) that managed API gateways already carry

- Your team has deep REST/GraphQL expertise and no immediate agentic AI workloads

Choose an MCP Server when:

- You are building agentic AI features where LLMs need to call tools, read data, or take actions

- Your AI agents need dynamic capability discovery without manual SDK maintenance

- You need session-level context persistence across multi-step agent tasks

- You are targeting compliance-conscious EU/UK markets and need capability-level audit trails

Build a Hybrid Architecture when:

- Your product has both human-facing API consumers and AI agent workloads

- You route human traffic through the API gateway and agent tool calls through MCP

- You need the security hardening of an API gateway for external traffic plus the context-native efficiency of MCP for internal agent operations
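The hybrid split described above comes down to a routing decision per request. The sketch below is one possible rule set, assuming each request can be labelled by where it originates and what kind of caller sent it. The `Request` fields and their string values are hypothetical labels, not part of any gateway or MCP API.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    API_GATEWAY = "api-gateway"
    MCP_SERVER = "mcp-server"


@dataclass(frozen=True)
class Request:
    source: str       # "external" or "internal" (hypothetical network label)
    caller_type: str  # "human_client", "service", or "agent" (hypothetical)


def choose_layer(request: Request) -> Layer:
    """Pick the infrastructure layer for a request in a hybrid architecture."""
    # All external traffic goes through the hardened gateway, agents included.
    if request.source == "external":
        return Layer.API_GATEWAY
    # Internal agent tool calls use the MCP layer.
    if request.caller_type == "agent":
        return Layer.MCP_SERVER
    # Internal service-to-service traffic stays on the gateway.
    return Layer.API_GATEWAY
```

Checking the source before the caller type is a deliberate choice in this sketch: an agent calling from outside the network still passes through the gateway's perimeter controls.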

According to a16z’s 2025 AI Infrastructure Report, over 60% of enterprise AI teams building agentic systems are already planning a hybrid API gateway + MCP architecture for production deployments in 2026.

Migration Path: Moving from API Gateway to MCP Server for AI Workloads

Enterprises do not need to rip and replace. A practical migration path:

- Audit your current API gateway usage — identify which endpoints are consumed by AI agents vs human-facing clients

- Wrap agent-facing endpoints as MCP tools — expose them through an MCP server layer without touching the underlying service

- Run parallel for 30–60 days — validate latency, security, and capability coverage before deprecating agent-specific gateway routes

- Adopt bounded autonomy controls — implement session-level capability scoping on the MCP layer from day one

- Instrument observability — add LLM-native monitoring (LangSmith, Helicone, or OpenTelemetry-compatible tools) alongside your existing gateway metrics
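The "wrap agent-facing endpoints as MCP tools" step can be sketched as deriving a tool descriptor from an existing client function, leaving the underlying service untouched. The example assumes the endpoint is already reachable through a typed Python function. `fetch_customer` is a hypothetical stub, and the type mapping covers only simple scalar parameters.

```python
import inspect


def fetch_customer(customer_id: str, include_orders: bool = False) -> dict:
    """Fetch a customer record from the existing customer service."""
    # Stub standing in for the real HTTP call to the existing endpoint.
    return {"customer_id": customer_id, "orders": [] if include_orders else None}


# Minimal mapping from Python annotations to JSON Schema types.
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def as_tool(func) -> dict:
    """Build a tool descriptor (name, description, inputSchema) from a function."""
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unsupported types fall back to "string" in this sketch.
        properties[name] = {"type": JSON_TYPES.get(param.annotation, "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }


customer_tool = as_tool(fetch_customer)
```

Because the descriptor is generated from the function signature, the gateway route and the MCP tool can run side by side during the parallel period without duplicating the service logic.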

This phased approach protects existing investments while enabling your AI teams to build on a protocol designed for the way models actually work.

Conclusion: The Architecture Decision That Will Define Your AI Roadmap

The API gateway vs MCP server decision is ultimately a question of who — or what — is consuming your infrastructure. API gateways were built for humans and service meshes. MCP servers were built for language models and autonomous agents.

For enterprises investing in agentic AI in 2026, MCP is not a replacement for your API gateway — it is an additional layer purpose-built for the model layer. The organisations that architect both correctly, routing the right traffic through the right layer, will deploy faster, spend less on token overhead, and maintain stronger compliance posture than those retrofitting legacy gateway configurations for AI workloads.

Immediate action steps:

- Map which of your current API endpoints are being called by AI agents

- Evaluate MCP server implementations: Anthropic’s reference implementation, LangChain MCP adapters, or custom builds

- Pilot a hybrid architecture for one internal AI agent workflow within the next 30 days

- Assign a clear owner for your MCP layer — this is infrastructure-grade work, not a side project
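For the first step, mapping which endpoints AI agents already call, a starting point is to group gateway access-log entries by consumer type. The sketch assumes agent traffic can be recognised from the User-Agent string, which will not hold in every environment; a dedicated API key or header per agent is more reliable. The log entries and `AGENT_MARKERS` are made-up sample data.

```python
from collections import Counter

# Hypothetical substrings that identify agent traffic in this example.
AGENT_MARKERS = ("agent", "mcp", "langchain")

# Sample access-log entries; a real audit would parse gateway logs instead.
ACCESS_LOG = [
    {"path": "/orders", "user_agent": "Mozilla/5.0"},
    {"path": "/orders", "user_agent": "support-agent/1.2"},
    {"path": "/invoices", "user_agent": "support-agent/1.2"},
    {"path": "/users", "user_agent": "ios-app/7.0"},
]


def is_agent(user_agent: str) -> bool:
    ua = user_agent.lower()
    return any(marker in ua for marker in AGENT_MARKERS)


def endpoints_by_consumer(entries) -> dict[str, Counter]:
    """Count calls per endpoint, split into agent and non-agent consumers."""
    counts = {"agent": Counter(), "other": Counter()}
    for entry in entries:
        key = "agent" if is_agent(entry["user_agent"]) else "other"
        counts[key][entry["path"]] += 1
    return counts


usage = endpoints_by_consumer(ACCESS_LOG)
```

Endpoints that appear under `usage["agent"]` are the candidates to pilot behind an MCP layer first.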

Frequently Asked Questions (FAQs)

Q1: Can I use an API gateway and an MCP server at the same time?

Yes — and most production AI architectures in 2026 do exactly this. The API gateway handles external, human-facing traffic with mature security tooling, while the MCP server handles internal agent-to-tool communication where context persistence and dynamic capability discovery matter most.

Q2: Is an MCP server secure enough for enterprise use?

MCP’s capability-scoped security model is architecturally stronger for AI agent workloads than traditional API gateway perimeter security. Each agent session receives only the tools and resources it is authorised to access. That said, MCP is newer technology — enterprises should layer MCP over existing network controls, not replace them.

Q3: What does it cost to run an MCP server?

Self-hosted MCP servers have no licensing cost — the protocol is open-source. Infrastructure costs depend on your hosting environment. A containerised MCP server on AWS ECS in the US runs approximately $30–$150/month for moderate agent workloads, depending on request volume.

Q4: Does MCP work with all major LLMs?

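MCP is model-agnostic: support lives in the client applications and SDKs around a model rather than in the model itself. It currently has first-class support in tooling for Claude (Anthropic), GPT-4o (OpenAI), Gemini (Google), and several open-source models including LLaMA 3.x. Adoption is accelerating: the MCP specification crossed 10,000 GitHub stars within six months of public release.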

Q5: Is an API gateway relevant for AI applications at all?

Absolutely. Any AI product with human-facing APIs, third-party integrations, or multi-tenant SaaS architecture still needs an API gateway for authentication, rate limiting, and compliance. The gateway and MCP server serve different consumers in the same stack.

Q6: Will MCP replace REST APIs entirely?

Not in the foreseeable future. REST APIs remain the standard for service-to-service and client-to-server communication. MCP specifically addresses the model-to-tool communication gap — it extends the ecosystem rather than replacing it.

Author Box

Waqas Raza, Technical SEO Specialist & Digital Strategist

Waqas Raza is a Technical SEO Specialist and Digital Strategist with a focus on B2B SaaS architecture. He helps scaling SaaS companies build content and infrastructure strategies that convert technical authority into sustainable organic growth. At Vitalora Life, Waqas covers agentic AI, SaaS systems, and the infrastructure decisions that define enterprise AI readiness.

Published on Vitalora Life: Scaling Businesses with Agentic AI & SaaS Insights | Category: Systems | Reading Time: ~7 minutes | Last Updated: May 2026